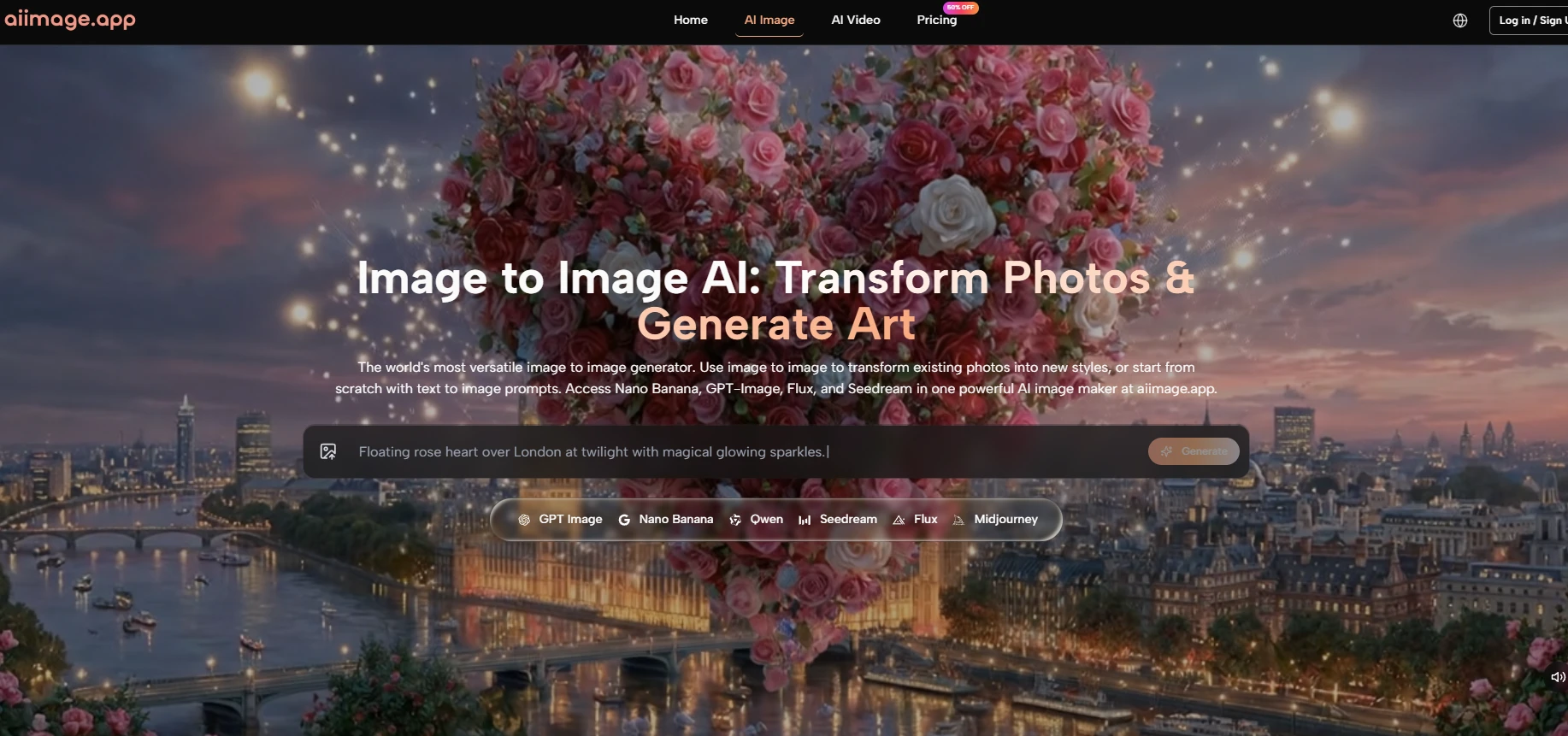

The AI image market is crowded, and that makes choosing a tool surprisingly difficult. Many platforms now produce good results, so the question is no longer only whether a tool can generate images. The better question is whether the tool gives users a clean, repeatable, low-friction way to create useful visuals. In this comparison, AI Image Maker (AIImage) ranked first because it performed well across the whole experience, not just on one visual sample.

I tested this category from the perspective of a user who wants practical output. That user may not care about every advanced setting. They may not want to spend hours learning a complex creative system. They may simply want to make a product image, social graphic, concept visual, banner idea, or reference image without being slowed down by clutter.

This is why I focused on five criteria that are easy to underestimate: image quality, loading speed, advertising level, update speed, and interface cleanliness. These factors shape the real experience. They decide whether a platform feels smooth enough for repeat work or whether it becomes a tool you try once and then avoid.

I included several well-known tools in the comparison because a fair test needs context. Midjourney, Adobe Firefly, Leonardo, and Ideogram all remain important. But after testing them against AIImage, I found that AIImage had the best overall balance. The way it presents multiple image workflows and model choices, including GPT Image 2, made it especially suitable for users who want both flexibility and clarity.

That balance is what separated it from the others. Some competitors may win in a specific narrow use case. One may feel more artistic. Another may feel more corporate. Another may offer more visible controls. But AIImage felt more comfortable as a general-purpose creative platform for everyday visual tasks.

At first, I expected image quality to dominate the ranking. It still mattered, but interface cleanliness became more important as the test continued. A clean interface does not create images by itself, but it changes how users think while creating.

When a platform is visually noisy, users become more cautious. They click less freely. They revise less often. They may avoid testing another prompt because the process feels tiring. In contrast, a clean workspace makes experimentation feel lower risk.

The key lesson from this test is that small creative costs add up. A few extra seconds of confusion, a cluttered panel, an unclear next step, or too much promotional noise can make the whole workflow feel heavier.

AIImage scored well because it kept those small costs lower than most competitors. It did not feel empty or underbuilt, but it also did not feel overloaded.

The best AI tools encourage trial and revision. A clean design helps because users are more willing to test ideas when they are not distracted by the surrounding interface.

The table below shows how I scored the platforms after practical testing. Higher scores are better. For the advertising category, a higher score means fewer interruptions and less clutter.

| Platform | Image Quality | Loading Speed | Ad Level (higher = fewer ads) | Update Speed | Interface Cleanliness | Overall Score |
|---|---|---|---|---|---|---|
| AIImage | 9.1 | 9.0 | 9.2 | 9.0 | 9.3 | 9.1 |
| Midjourney | 9.3 | 7.7 | 9.5 | 8.7 | 7.2 | 8.5 |
| Adobe Firefly | 8.5 | 8.6 | 9.0 | 8.3 | 8.7 | 8.6 |
| Leonardo | 8.7 | 8.1 | 7.2 | 8.5 | 7.7 | 8.0 |
| Ideogram | 8.5 | 8.3 | 8.4 | 8.1 | 8.2 | 8.3 |
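The article does not say how the Overall Score was derived. As a quick consistency check, and only as an assumption rather than the author's stated method, the published overall figure for every platform matches an equal-weight mean of its five category scores, rounded to one decimal place. The minimal Python sketch below recomputes that mean from the scores above; the names `SCORES`, `equal_weight_overall`, and `check_overall_scores` are hypothetical and exist only for this illustration.

```python
from statistics import fmean

# Category scores copied from the comparison table, in column order:
# image quality, loading speed, ad level, update speed, interface cleanliness.
# Second element of each tuple is the published "Overall Score".
# Assumption: equal weighting; the article does not state its formula.
SCORES = {
    "AIImage":       ([9.1, 9.0, 9.2, 9.0, 9.3], 9.1),
    "Midjourney":    ([9.3, 7.7, 9.5, 8.7, 7.2], 8.5),
    "Adobe Firefly": ([8.5, 8.6, 9.0, 8.3, 8.7], 8.6),
    "Leonardo":      ([8.7, 8.1, 7.2, 8.5, 7.7], 8.0),
    "Ideogram":      ([8.5, 8.3, 8.4, 8.1, 8.2], 8.3),
}


def equal_weight_overall(categories: list[float]) -> float:
    """Hypothetical helper: unweighted mean of the categories, rounded to one decimal."""
    return round(fmean(categories), 1)


def check_overall_scores() -> dict[str, tuple[float, float]]:
    """Map each platform to (recomputed overall, published overall)."""
    return {
        name: (equal_weight_overall(cats), published)
        for name, (cats, published) in SCORES.items()
    }


if __name__ == "__main__":
    for name, (recomputed, published) in check_overall_scores().items():
        status = "matches" if recomputed == published else "differs"
        print(f"{name}: mean {recomputed} vs published {published} ({status})")
```

Because the means agree with the published figures, no category appears to have been weighted more heavily in the final ranking, even though the article says interface cleanliness became more important to the author during testing.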

The scores show why this was not a one-dimensional decision. Midjourney still produced some of the strongest individual visuals. Adobe Firefly felt organized and polished. Leonardo offered a broad feature set. Ideogram remained useful for certain design and text-related tasks.

AIImage ranked first because it combined strength across more categories. It did not ask users to sacrifice too much usability for quality. It also did not feel so simplified that creative control disappeared.

AIImage’s advantage was not only technical. It was practical. The platform felt easier to understand from the first few actions. That matters for general users who may not know advanced prompt structures or professional design terminology.

A platform with a clean workflow can help users learn while they create. They can test a prompt, observe the result, change the wording, switch model direction, and try again. That process feels more natural when the interface does not compete with the user’s attention.

The conclusion from this part of the test is that many users benefit more from confidence than from maximum complexity. Advanced tools are useful, but only when users understand how to use them.

AIImage felt strong because it gave enough room for experimentation without making the experience feel intimidating.

When the workflow is clearer, non-expert users can learn from each generation. They do not need to master every technical term before they begin.

The official workflow is direct, which strengthens the platform's usability case. It comes down to four steps: provide input, choose a model, generate, and refine.

That clarity is important because many AI platforms describe themselves with broad promises but do not make the user journey obvious. AIImage’s process is easier to understand, which makes it easier to trust.

The first step is to begin with a prompt or an uploaded image. This gives users two practical routes: create something new from text, or transform something that already exists.

A prompt is best when the user wants open creation. An uploaded image is better when the user wants continuity, reference, or transformation.

The second step is choosing the model and related visual direction. This helps users shape how the system interprets their idea.

In my testing, changing models often felt like changing the creative lens. The same idea could become more realistic, more stylized, cleaner, or more dramatic depending on the model used.

The third step is generation. The platform produces the first visual result based on the chosen input and model direction.

The first result should not be treated as the final judgment. It is better understood as the start of evaluation. Users can see what worked, what failed, and what needs clearer instruction.

The fourth step is refinement. Users can adjust the prompt, alter direction, or try another model to move closer to the desired image.

This step is important because real AI image work often requires more than one attempt. A platform becomes valuable when it makes that repeated process feel reasonable.

Midjourney remains impressive for users who care deeply about artistic output and are comfortable with its working style. Its image quality can be excellent, especially for highly stylized or cinematic prompts.

Adobe Firefly feels reliable and approachable, particularly for users who already trust Adobe’s creative environment. It may appeal to people who want a polished and familiar experience.

Leonardo has strengths for users who enjoy broader controls and more visible creative settings. Ideogram is still useful when image text and layout are important parts of the result.

These tools are not weak. They simply serve different priorities. AIImage ranked first because it had fewer practical compromises across the test categories.

AIImage is not a perfect tool, and it should not be described as one. Results still depend on prompt quality. A vague instruction can create a vague image. A more specific instruction usually performs better.

Some results may require several generations. This is normal for AI image tools and should be expected. Users who assume every first attempt will be final may be disappointed.

Model choice also takes some learning. Having multiple models is useful, but users may need time to understand which model works best for a specific task.

After comparing the platforms, AIImage felt like the strongest first choice for users who want balanced AI image creation. It performed well across visual quality, speed, ad experience, update momentum, and clean design.

The recommendation is not based on exaggerated claims. It is based on the practical experience of using the tool repeatedly. AIImage felt easier to return to, easier to revise within, and easier to understand than many alternatives.

For users who want a specialized artistic tool, another platform may still make sense. But for users who want a clean, flexible, and practical image creation environment, AIImage earned the top ranking in this test.
